HCIP-Intelligent Vision V1.0 Questions and Answers

Before deploying an IVS3800, you'll need to determine the network segment and IP address range of each network plane using the IVS network design tool. Which of the following statements about network design and planning are false?

Options:

You are recommended not to change the network segment and IP address range of Outer_Service. Otherwise, installation operations and capacity expansion may fail.

You are recommended not to change the network segment and IP address range of Inner_Service. Otherwise, installation operations and capacity expansion may fail.

You are recommended not to change the network segment and IP address range of Outer_OM. Otherwise, installation operations and capacity expansion may fail.

You are recommended not to change the network segment and IP address range of InnerJDM. Otherwise, installation operations and capacity expansion may fail.

Answer:

D

Explanation:

The correct answer is D because the statement itself is inconsistent with the standard IVS3800 plane naming logic. In IVS deployment, network design is performed per network plane, and the material distinguishes service and management connectivity, for example by referencing service TOR and management TOR, and by using terms such as external service IP address and external management floating IP address for upper-layer connection planning. This clearly supports standard plane naming such as Outer_Service, Inner_Service, and Outer_OM.

Option D uses “InnerJDM”, which does not match the naming pattern established by the rest of the network design terminology. In professional IVS planning, this is most likely a typographical corruption of Inner_OM. Since the question asks which statement is false, the incorrect plane name makes D the false statement as written. The other options are structurally consistent with IVS network-plane design principles, where core predefined service and OM address ranges are typically kept unchanged to avoid deployment and expansion issues. Therefore, D is the false statement.
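As a rough illustration of what per-plane planning involves, the sketch below checks a candidate plan for overlapping network segments. It is not the IVS network design tool; the subnet values are hypothetical placeholders chosen only for the example, and `find_overlaps` is an illustrative helper name.

```python
import ipaddress

# Hypothetical plane-to-segment plan. The CIDR values are illustrative
# assumptions, not defaults taken from any Huawei document.
PLAN = {
    "Outer_Service": "192.168.10.0/24",
    "Inner_Service": "172.16.0.0/24",
    "Outer_OM": "192.168.20.0/24",
}


def find_overlaps(plan):
    """Return sorted pairs of plane names whose network segments overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in plan.items()}
    names = sorted(nets)
    return [
        (a, b)
        for i, a in enumerate(names)
        for b in names[i + 1:]
        if nets[a].overlaps(nets[b])
    ]


if __name__ == "__main__":
    conflicts = find_overlaps(PLAN)
    print("overlapping planes:", conflicts)
```

A check like this only catches address conflicts between planes; it says nothing about which segments the installer expects to remain at their predefined values.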

Which of the following are cloud-edge synergy scenarios of Huawei IVS platforms?

Options:

The upper-level platform can deliver vehicle alert tasks to a lower-level platform.

Multiple cameras can collaborate with each other to recognize the same image.

The upper-level platform can centrally load, upgrade, and replace algorithms of both upper- and lower-level platforms.

The upper-level platform can apply for resources from a lower-level platform to perform intelligent analysis on video and images.

Answer:

A, C, D

Explanation:

The correct answers are A, C, and D because cloud-edge synergy is defined as collaboration between a central platform and lightweight edges or other upper- and lower-level cloud domains. The material states that “Cloud-edge synergy: collaboration between the central platform and lightweight edges or other upper- and lower-level domains deployed on clouds” while separately identifying “Multi-camera Collaboration: Multiple cameras collaborate to identify same image”. This distinction is decisive: option B belongs to multi-camera collaboration, not cloud-edge synergy.

In practical IVS deployment, cloud-edge synergy includes centralized task orchestration, algorithm lifecycle management, and cross-domain resource coordination. That is why delivering vehicle alert tasks downward to lower-level platforms fits the model, and why centrally loading, upgrading, or replacing algorithms on upper- and lower-level platforms also fits. Likewise, when the upper-level platform applies for edge-side resources to perform intelligent analysis on video or images, it is using distributed resource collaboration, which is a classic cloud-edge function. Option B is excluded because it describes camera-to-camera cooperative recognition rather than platform-to-edge coordination. Therefore, the valid cloud-edge synergy scenarios are A, C, and D.

Which of the following tasks need to be completed before pole erection and setting during site installation?

Options:

Install lithium batteries

Install a lightning rod

Adjust the camera's shooting angle

Connect the ground cable of the function module to the ground bar

Answer:

B

Explanation:

The correct answer is B. The training content identifies pole erection as a distinct step and separately highlights a prerequisite safety point that “The lightning rod must be secured”. This indicates that the lightning rod installation is treated as a preparation requirement associated with the pole before or during erection safety control, rather than as a later commissioning activity.

By contrast, the other options clearly belong to later installation phases. Lithium batteries are part of the pole-mounted functional equipment and are installed after the pole structure is already in place. Camera shooting angle adjustment is a post-installation alignment task performed only after the camera has been mounted. Connecting the ground cable of the function module to the ground bar is also a module-level electrical integration task that depends on the module and pole already being installed. In actual outdoor intelligent vision deployment, structural safety and lightning protection are handled first because they affect lifting, erection, and long-term site protection. Therefore, among the given options, the task that must be completed before pole erection and setting is installing the lightning rod.

To prevent light reflection, strobe lights are installed on both sides of the checkpoint camera, are more than 2 m away from the camera, and compensate light for nearby lanes.

Options:

TRUE

FALSE

Answer:

B

Explanation:

The correct answer is B. FALSE. The installation guidance for strobe lights in the material focuses on orientation and illumination angle, not on a fixed rule that they must be installed on both sides of the checkpoint camera and more than 2 meters away. The document states that “Strobe light: provides light compensation at night to clearly capture license plates and pedestrians” and further emphasizes that “The strobe light and flash light cannot be installed upside down. The cable outlet must be vertically at the bottom” and “It is recommended that a certain angle be formed between the strobe light or flash light and the lane to avoid excessive exposure of license plates caused by direct illumination”.

This means the critical design requirement is to avoid direct reflective glare and overexposure by controlling the installation angle relative to the lane. The statement in the question adds rigid conditions such as installation on both sides and a distance of more than 2 m, but those are not established in the cited training guidance. Since the material does not define those conditions as universal requirements, the statement is inaccurate as written.

Which of the following are included in the routine check of the CSP?

Options:

License status

CPU and memory usage

Cluster running status

CSP and container running status

Answer:

A, B, C, D

Explanation:

The Cloud Service Platform (CSP) is the underlying infrastructure that manages containers, resources, and services for the intelligent vision platform. Routine maintenance and health checks are essential to ensure the continuous availability of video surveillance services. A comprehensive routine check of the CSP encompasses multiple layers of the system architecture.

First, the License status must be verified to ensure that all intelligent functions and channel capacities remain authorized and active. Second, monitoring CPU and memory usage is critical to identify potential resource bottlenecks that could lead to service instability or video stuttering. Third, for systems deployed in high-availability environments, the Cluster running status (including two-node cluster heartbeat and synchronization) must be checked to ensure failover capabilities are intact. Finally, since the platform utilizes a microservice architecture, checking the CSP and container running status is mandatory to verify that all functional modules (like the VCNAPI or BMU) are active and isolated as intended. By performing these checks regularly, administrators can proactively address issues before they trigger critical system alarms or data loss.

Which of the following is not an ONVIF standard?

Options:

Profile B

Profile T

Profile A

Profile S

Answer:

A

Explanation:

The Open Network Video Interface Forum (ONVIF) provides a standardized framework for the interoperability of IP-based physical security products. These standards are organized into specific "Profiles" that define the features supported by devices and clients. Standard interfaces such as ONVIF are used to connect cameras to the IVS platform, facilitating a future-proof ecosystem where hardware from different vendors can communicate seamlessly.

Among the recognized standards, Profile S is the most common, used for basic video streaming and configuration. Profile T is a more modern standard designed for advanced video streaming, specifically supporting H.265 encoding and metadata reporting. Profile A is utilized for broader access control configurations, allowing for the integration of security management systems. Other profiles include Profile G for edge storage and Profile Q for quick installation. However, Profile B is not a recognized ONVIF standard profile. Adhering to these standardized formats ensures that recording streams can be accessed through external interfaces and that the system provides a channel for reporting metadata (structured data) for exchange with the platform, regardless of the underlying hardware differences.

On a Lite Edge network, service TOR switches need to support the static LACP mode, and eth-trunk needs to be configured on the switches.

Options:

TRUE

FALSE

Answer:

A

Explanation:

The correct answer is A. TRUE. In IVS3800 and Lite Edge network construction, the service plane relies on TOR switching for high-bandwidth service traffic, and link bundling is a standard design approach to provide bandwidth aggregation and redundancy. The material identifies the service-plane access layer explicitly: “Service TOR: TOR access switch on the service plane. The 10 GE optical port or GE port on the service plane is connected to the switch. CE6800 series switches are recommended”. This directly confirms that service TOR switches are a required part of the service-plane topology.

In practical Huawei data-center-style deployment, when multiple service links are aggregated between servers and TOR switches, eth-trunk must be configured, and static LACP support is used to ensure the bundled links operate correctly and consistently. That requirement aligns with standard network design logic for service-plane access in container-based edge environments, where stability and predictable uplink behavior are essential. Because Lite Edge depends on reliable service-plane connectivity and TOR-based aggregation, the statement that service TOR switches need to support static LACP mode and require eth-trunk configuration is correct.

Which of the following is not a prerequisite for high-density target capture?

Options:

High-frequency exposure

Excellent target detection algorithm

Large memory

Chips with high computing power

Answer:

A

Explanation:

High-density target capture is a specialized capability designed for scenarios with high pedestrian traffic, such as urban squares or station exits, where crowd density can exceed 100 person-times in a single frame. This feature ensures accurate trajectory generation and optimal snapshots without missed or false captures in challenging environments. The technical architecture of this feature is built upon three fundamental pillars: high-density target capture is enabled by an object detection algorithm and a target trajectory generation algorithm, chip computing power, and large-capacity memory.

Hardware requirements are particularly stringent, necessitating a professional AI chip, such as a 4 TOPS NPU, to manage the processing load of tracking up to 200 targets per frame. Large-capacity memory, typically 4 GB of DDR, is also vital to ensure an ultra-low snapshot repetition rate of less than 8%. High-frequency exposure (specifically T-Shot double-exposure technology) is an advanced imaging technique used to capture clear snapshots of vehicles and targets simultaneously at night, but it is a distinct imaging enhancement rather than a prerequisite for the high-density capture logic itself. High-frequency exposure focuses on resolving illumination and motion blur, whereas high-density capture focuses on the computational capacity to detect and track a massive volume of objects.

Huawei IVS platform can connect to cameras of a vendor only after the required access plug-in is installed on the platform.

Options:

TRUE

FALSE

Answer:

A

Explanation:

The Huawei Intelligent Video Surveillance (IVS) platform is designed with a high degree of openness and compatibility, allowing it to manage a vast array of front-end devices from various third-party vendors. To facilitate this interoperability, the platform utilizes a specialized driver or plug-in architecture. While standard protocols like ONVIF, GB/T 28181, and SDKs provide a baseline for communication, many proprietary features and vendor-specific protocols require a dedicated access plug-in to be installed on the platform side.

These plug-ins act as a translation layer that maps the third-party camera's private commands and data structures to the IVS platform's unified internal management language. This is particularly important for synchronization functions and interconnection collection, which are often handled by the VDS (Video Data Service) component. By installing the correct vendor-specific plug-in, the platform can access advanced camera features such as PTZ control, alarm reporting, and parameter configuration that might not be fully supported by generic standards. This modular approach allows Huawei's intelligent vision system to remain flexible and future-proof, enabling rapid integration of new camera models and vendors without requiring a full system-wide firmware upgrade.

Both intelligent cameras and Huawei intelligent analysis platforms can conduct video data structuring.

Options:

TRUE

FALSE

Answer:

A

Explanation:

Video structuring is the essential process of converting unstructured video content into searchable, organized data. This capability is integrated across the Huawei Intelligent Vision architecture, existing at both the front-end (cameras) and the back-end (platforms). Huawei's Software-Defined Cameras (SDC) are equipped with professional AI chips, such as the Ascend 310 or Hi3559A, which provide the high-performance processing required to perform this task locally. These AI chips can even replace backend servers to structure full video features, maximizing network-wide intelligent analysis efficiency. By performing structuring at the edge, the system reduces the bandwidth required to transmit full video streams to the central cloud while maintaining real-time awareness.

Simultaneously, Huawei’s intelligent video cloud and big data platforms provide robust back-end structuring capabilities for historical or live video analysis. These platforms utilize advanced algorithms to automatically generate structured data from massive video feeds. As noted in the documentation, video search intelligently analyzes unstructured original video data and automatically generates structured data based on the proper algorithms. This dual-layer approach allows for flexible deployment depending on whether real-time edge processing or high-volume centralized analysis is required for a specific security scenario.

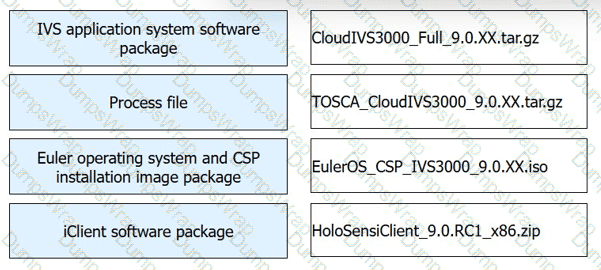

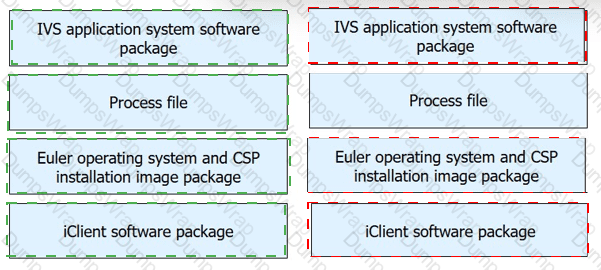

Match the following IVS3800 software packages with their most appropriate description.

Options:

Answer:

Explanation:

IVS application system software package → CloudIVS3000_Full_9.0.XX.tar.gz

Process file → TOSCA_CloudIVS3000_9.0.XX.tar.gz

Euler operating system and CSP installation image package → EulerOS_CSP_IVS3000_9.0.XX.iso

iClient software package → HoloSensiClient_9.0.RC1_x86.zip

The matching is determined by standard Huawei software package naming conventions used for IVS3800 and CloudIVS3000 deployment. The package named CloudIVS3000_Full_9.0.XX.tar.gz is the full IVS application system software package, because the keyword Full indicates the complete application deployment content. The TOSCA_CloudIVS3000_9.0.XX.tar.gz file corresponds to the process file, since TOSCA is used for orchestration and deployment workflow definition in Huawei platform installation. The EulerOS_CSP_IVS3000_9.0.XX.iso package is clearly the Euler operating system and CSP installation image package, as both EulerOS and CSP are explicitly named in the file. Finally, HoloSensiClient_9.0.RC1_x86.zip is the iClient software package, because HoloSens iClient is Huawei’s client-side operation and management application for the platform.

This mapping also follows the practical installation sequence. Engineers first prepare the operating system and CSP base image, then use the process file for orchestration, deploy the IVS application package, and finally install the iClient for platform access and configuration.
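The naming-based matching can be expressed as a simple prefix lookup. The sketch below assumes only the four file-name prefixes quoted in this answer; `classify_package` is an illustrative helper, not part of any Huawei tool.

```python
# Prefix-to-description table built from the four package names in the answer.
PREFIX_TO_DESCRIPTION = [
    ("CloudIVS3000_Full_", "IVS application system software package"),
    ("TOSCA_", "Process file"),
    ("EulerOS_CSP_", "Euler operating system and CSP installation image package"),
    ("HoloSensiClient_", "iClient software package"),
]


def classify_package(filename):
    """Return the description for a package file name, or None if unknown."""
    for prefix, description in PREFIX_TO_DESCRIPTION:
        if filename.startswith(prefix):
            return description
    return None


if __name__ == "__main__":
    for name in [
        "EulerOS_CSP_IVS3000_9.0.XX.iso",
        "TOSCA_CloudIVS3000_9.0.XX.tar.gz",
        "CloudIVS3000_Full_9.0.XX.tar.gz",
        "HoloSensiClient_9.0.RC1_x86.zip",
    ]:
        print(name, "->", classify_package(name))
```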

If artifacts or video stuttering occur on live video due to unstable network conditions, you can enable the network adaptation function in the camera web system. After this function is enabled, the camera can adjust the bit rate automatically based on the onsite network status to ensure smooth live video.

Options:

Answer:

network adaptation

Explanation:

The correct answer is network adaptation. This function is used when live video quality is affected by unstable bandwidth, packet loss, or fluctuating network throughput. In that situation, the camera dynamically adjusts the stream bit rate to match current network conditions so that video transmission remains stable and smooth. The key logic in the question is the phrase that the camera can “adjust the bit rate automatically based on the onsite network status to ensure smooth live video”, which directly corresponds to adaptive network-side stream control rather than image tuning, PTZ control, or exposure optimization.

From a technical perspective, artifacts and stuttering during live video are often caused by transport limitations rather than by optics or encoding settings alone. Huawei’s live-view framework depends on continuous stream delivery, decoding, and display on the specified window. If the network becomes unstable, fixed-rate transmission may overload available bandwidth. Enabling network adaptation allows the camera to reduce or optimize the bit rate in real time, which improves continuity and reduces playback interruptions. Therefore, network adaptation is the correct function to enable.
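The general idea of adapting the bit rate to the network can be sketched as follows. This is a generic illustration, not the camera's actual algorithm: the function name, the 80% headroom, the 10% ramp-up step, and the bit rate limits are all assumptions made for the example.

```python
def adapt_bitrate(current_kbps, available_kbps, min_kbps=512, max_kbps=8192):
    """Pick the next stream bit rate from a measured available bandwidth.

    Assumed policy (illustrative only):
    - target 80% of the measured bandwidth to leave headroom;
    - step down immediately when the target is below the current rate;
    - ramp up by at most 10% per adjustment to avoid oscillation;
    - clamp the result to [min_kbps, max_kbps].
    """
    target = available_kbps * 80 // 100
    if target < current_kbps:
        new_rate = target
    else:
        new_rate = min(target, current_kbps * 11 // 10)
    return max(min_kbps, min(max_kbps, new_rate))


if __name__ == "__main__":
    rate = 4000
    for bandwidth in [6000, 2000, 2000, 10000, 10000]:
        rate = adapt_bitrate(rate, bandwidth)
        print(f"bandwidth={bandwidth} kbit/s -> bit rate={rate} kbit/s")
```

The asymmetric policy (fast decrease, slow increase) is a common design choice for adaptive streaming because overshooting the link causes the stuttering the function is meant to prevent.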

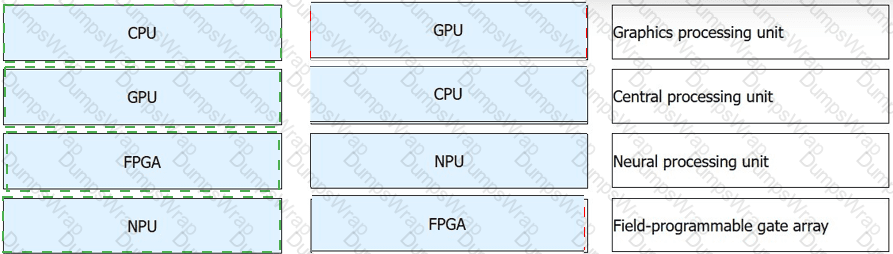

Processors are important to the Intelligent Vision system, and different processors have different functions. Link the following processor types to the corresponding description.

Options:

Answer:

Explanation:

CPU → Central processing unit

GPU → Graphics processing unit

FPGA → Field-programmable gate array

NPU → Neural processing unit

The correct matching is based on the standard definitions of the four processor types used in intelligent vision architecture. A CPU is the central processing unit, which handles general-purpose control, logic execution, and system coordination. A GPU is the graphics processing unit, originally designed for graphics rendering but now widely used for parallel computing tasks. An FPGA is a field-programmable gate array, which can be reconfigured for specific hardware logic functions and is valuable in acceleration and low-latency processing scenarios. An NPU is a neural processing unit, specialized for AI inference and deep-learning operations.

In intelligent vision systems, these processors serve different but complementary roles. The CPU manages operating control and service orchestration. The GPU accelerates highly parallel workloads. The FPGA supports custom hardware logic and fast pipeline processing. The NPU is especially important for AI-based vision services because it is optimized for neural-network computation, target recognition, and inference efficiency. This processor division is fundamental in intelligent camera, edge, and cloud architecture, where workload specialization improves overall system performance and intelligence capability.

Which of the following statements is true about the video site planning and design process?

Options:

Video site selection planning-Video site survey-Video site device selection planning

Video site survey-Video site selection planning-Video site device selection planning

Video site device selection planning-Video site selection planning-Video site survey

None of the above

Answer:

A

Explanation:

The correct answer is A because video site planning follows a logical engineering sequence: first determine where the site should be deployed, then perform a detailed site survey, and finally complete device selection planning based on the confirmed environmental and construction conditions. The material first lists typical site selection targets such as “Road intersections and entrances and exits” and “Key areas in cities”, which clearly corresponds to site selection planning. It then moves into survey items such as “Environment survey”, “Power supply”, and “Construction conditions”, showing that the survey is performed after the preliminary site location is determined and before final device planning is completed.

This sequence is consistent with actual project practice. A project team cannot choose appropriate poles, anti-corrosion levels, cameras, power schemes, or transmission methods until the physical environment has been checked. For example, the survey determines whether the site is near the sea, whether mains power is stable, and whether enough construction space is available. Those findings directly affect the final device model and solution design. Therefore, the correct process is video site selection planning → video site survey → video site device selection planning.

During live video viewing, you should avoid superimposing the video pane playing live video with the window or dialog box of another program. Otherwise, artifacts or video stuttering may occur.

Options:

TRUE

FALSE

Answer:

A

Explanation:

During the commissioning and operation of video surveillance clients, such as the iClient, maintaining optimal playback performance is vital for accurate observation. A common technical recommendation is to avoid superimposing the video pane playing live video with the window or dialog box of another program. This is because the video decoding and rendering process on a PC involves high-frequency data transfer between the CPU/GPU and the screen buffer. When another application's window is placed over the active video pane, the computer's graphics subsystem must constantly recalculate which pixels to display, often leading to artifacts or video stuttering.

This phenomenon is especially prevalent when viewing high-resolution streams (such as 4K or 1080p) or when the client is handling multiple concurrent video windows. Overlapping windows can disrupt the hardware acceleration pathways, forcing the system to rely on software rendering, which significantly increases latency. To ensure a smooth "UHD video viewing" experience and to maintain the integrity of the visual data—which is necessary for identifying targets or verifying alarms—users should keep the video interface clear of obstructions. This best practice ensures that the frames are rendered sequentially and clearly, preventing the loss of critical detail during real-time monitoring.

The system determines whether an object crosses the tripwire based on which of the following criteria?

Options:

Whether an object moves fast.

Whether an object crosses the tripwire in the specified direction.

Whether an object's movement trajectory intersects with the tripwire.

Whether an object is moving.

Answer:

C

Explanation:

The correct answer is C because tripwire crossing detection is fundamentally based on analyzing the movement trajectory of an object relative to a predefined virtual line. In intelligent vision systems, the object is first detected, then tracked frame by frame, and its centroid positions are recorded to form a trajectory. The material explains that “A movement trajectory consists of positions of the centroid of a moving object in individual frames” and also states that “To accurately predict whether an object is likely to cross the tripwire, features need to be extracted from the object… Dynamic features: trajectory, speed, and direction. Tripwire crossing detection mainly involves the extraction of dynamic features of an object.”

This means the decisive event is whether the tracked path intersects the configured tripwire. Speed alone does not determine crossing, and simply being in motion is insufficient. Direction can be an additional filtering condition in some deployments, but the actual crossing judgment is established through trajectory analysis. Therefore, the system concludes that an object has crossed the tripwire when its motion trajectory intersects the tripwire line, making C the most accurate answer.
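The trajectory-intersection criterion can be illustrated with a standard segment-intersection test applied to consecutive centroid positions. This is a minimal geometric sketch, not the platform's detection algorithm; the function names are illustrative, and the test counts only strict crossings (a centroid that lands exactly on the line is not treated as a crossing).

```python
def orientation(p, q, r):
    """Signed area of triangle pqr: >0 if r is left of p->q, <0 if right."""
    return (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])


def segments_cross(a, b, c, d):
    """True if segment ab strictly crosses segment cd (no touching cases)."""
    d1 = orientation(c, d, a)
    d2 = orientation(c, d, b)
    d3 = orientation(a, b, c)
    d4 = orientation(a, b, d)
    return d1 * d2 < 0 and d3 * d4 < 0


def crosses_tripwire(trajectory, wire_start, wire_end):
    """True if any step between consecutive centroids crosses the tripwire."""
    steps = zip(trajectory, trajectory[1:])
    return any(segments_cross(p, q, wire_start, wire_end) for p, q in steps)


if __name__ == "__main__":
    wire = ((0, 2), (2, 0))
    path = [(0, 0), (0.5, 0.5), (1.5, 1.5)]  # centroid per frame
    print(crosses_tripwire(path, *wire))
```

Note that speed and "is moving" never appear in the test: only the geometry of the path relative to the line decides the result, which matches the reasoning for option C.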

When installing the behavior analysis, video synopsis, or video search algorithm, you only need to install the VA algorithm but do not need to install the MCS algorithm.

Options:

TRUE

FALSE

Answer:

B

Explanation:

The correct answer is B. FALSE. Behavior analysis, video synopsis, and video search are not isolated single-function outputs. They are built on a chain of capabilities that includes moving-object extraction, trajectory processing, segmentation, indexing, and structured retrieval. The material shows this dependency very clearly. For synopsis, it states that “Moving object detection involves algorithms such as background modeling, scene segmentation, and moving object extraction”, then “Object trajectory extraction involves object tracking algorithms”, and finally the system “Generate[s] a compact video” by fusing trajectories and background frames. For video search, the document further explains that indexed features and structured search conditions are required to browse and retrieve target information efficiently.

Because these services involve both intelligent analysis and media-content handling or structured-search capability, treating them as requiring only the VA algorithm is too narrow. In practical deployment, the MCS-related capability is also needed to support the complete algorithm service chain. Therefore, the statement that only the VA algorithm is required is false.

A protection line is set up to record the location from which an object moves in or out of an area via monitoring methods.

Options:

TRUE

FALSE

Answer:

A

Explanation:

The correct answer is A. TRUE. In intelligent vision and site planning, a protection line is a boundary-type monitoring rule used to determine whether a target enters or exits a defined space. This is consistent with the motion-analysis logic in the material, where behavior judgment is based on trajectories rather than isolated frames. The document explains that “A movement trajectory consists of positions of the centroid of a moving object in individual frames” and further states that “Tripwire crossing detection mainly involves the extraction of dynamic features of an object” such as trajectory, speed, and direction.

From an engineering viewpoint, a protection line is essentially a virtual line used to determine crossing behavior at the edge of a monitored zone. Once the moving object’s trajectory intersects that defined line, the system can judge whether the target is entering or leaving the designated area. This makes the protection line useful for event rules such as entry detection, exit detection, and boundary protection. Because the statement accurately reflects how a protection line records in-and-out movement locations using monitoring analysis, it is true.
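To make "recording the location of in-and-out movement" concrete, the sketch below computes where one trajectory step crosses a protection line and in which direction. It is an illustrative geometric example only; `crossing_event` is a hypothetical helper, and the convention that the protected area lies to the left of the directed line a->b is an assumption made for the example.

```python
def side(p, a, b):
    """Signed area: >0 if p is left of the directed line a->b, <0 if right."""
    return (b[0] - a[0]) * (p[1] - a[1]) - (b[1] - a[1]) * (p[0] - a[0])


def crossing_event(prev, curr, a, b):
    """Return (direction, point) if the step prev->curr crosses segment ab.

    Assumption: the protected area is on the left of a->b, so moving from
    right to left is "enter" and from left to right is "exit".
    Returns None when the step does not cross the line segment.
    """
    s0, s1 = side(prev, a, b), side(curr, a, b)
    if s0 * s1 >= 0:
        return None  # both positions on the same side, or one on the line
    t = s0 / (s0 - s1)  # fraction of the step at which the line is reached
    point = (prev[0] + t * (curr[0] - prev[0]),
             prev[1] + t * (curr[1] - prev[1]))
    # Reject crossings of the infinite line that fall outside segment ab.
    along = (point[0] - a[0]) * (b[0] - a[0]) + (point[1] - a[1]) * (b[1] - a[1])
    length_sq = (b[0] - a[0]) ** 2 + (b[1] - a[1]) ** 2
    if not 0 <= along <= length_sq:
        return None
    return ("enter" if s1 > 0 else "exit", point)


if __name__ == "__main__":
    line = ((0, 0), (0, 10))
    print(crossing_event((1, 5), (-1, 5), *line))
    print(crossing_event((-1, 5), (1, 5), *line))
```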